If you're curious about what GPT stands for, it's "Generative Pre-trained Transformer" - quite a mouthful.

The only non-evident word here is "Transformer". Nothing to do with Optimus Prime - a Transformer is a type of neural network introduced in 2017 in the paper "Attention Is All You Need" by a team of researchers at Google.

Some of the key breakthroughs of transformers:

- weighing the importance of different input words

- ability to be trained on very large amounts of inputs

- ability to be accelerated (GPU) for fast results
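To make the first bullet concrete, here is a minimal sketch of scaled dot-product attention, the mechanism a Transformer uses to weigh input words against each other. The vectors are toy values made up for illustration and the function names are not from any library; real models use learned projections and run this as large matrix multiplications on GPUs.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # The output is the weighted average of the value vectors.
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output


# Toy 2-dimensional vectors for three input words (made-up numbers).
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
query = [1.0, 0.0]

weights, output = attention(query, keys, values)
print(weights)  # one weight per input word
print(output)   # blend of the value vectors
```

The first and third words get the same, larger weight because their keys align equally well with the query; the second word contributes less.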

If you break it down:

Generative: generates content.

Pre-trained: trained on very large amounts of text.

Transformer: the neural network that processes the input into output.

Let's see how naming evolves as technology gets more widely adopted.

10 Oct 2022

Generative Pre-trained Transformer 3 (GPT-3) was launched in beta in June 2020 and uses 175 billion parameters!

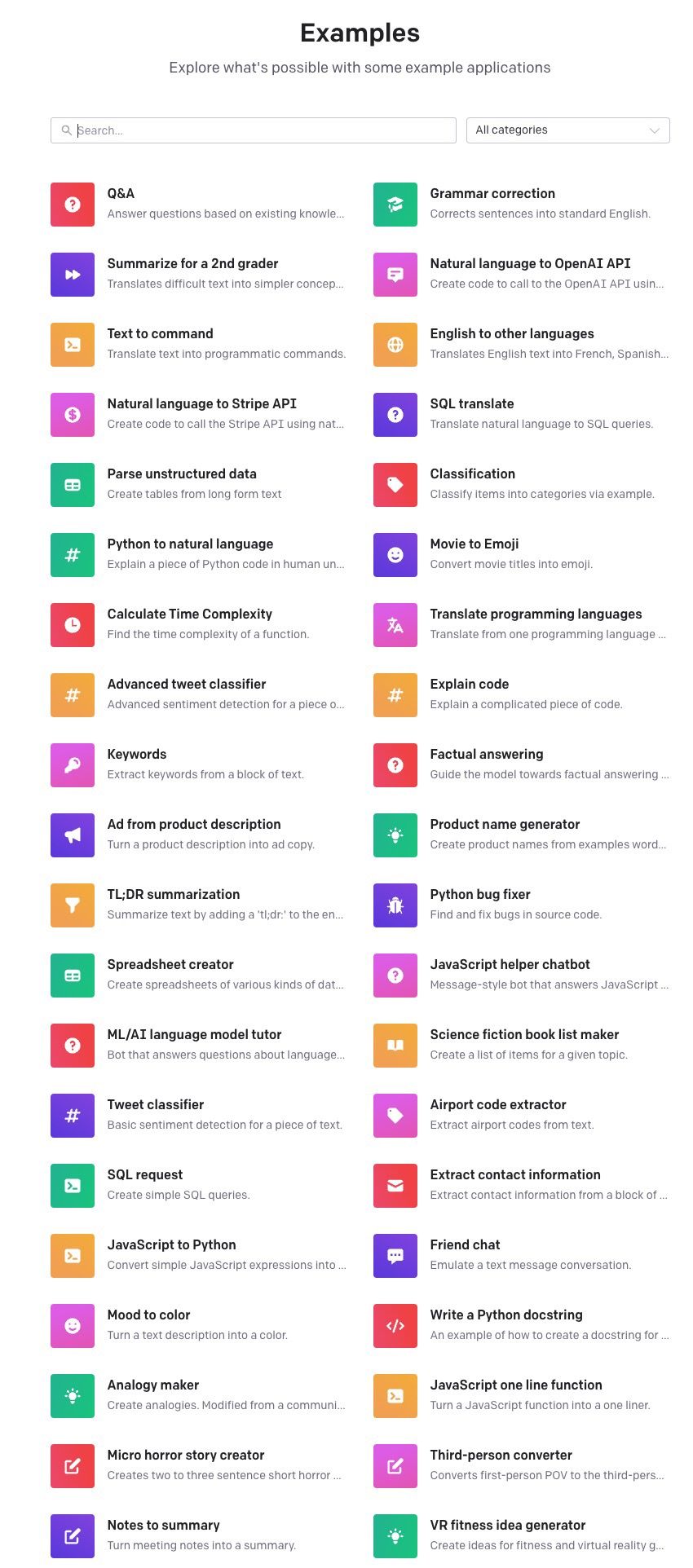

Playground

available models:

Pricing

Getting started

import json

import openai

from gpt import GPT

from gpt import Example

# Load the key file first: `data` must exist before the API key is set.

with open('GPT_SECRET_KEY.json') as f:

    data = json.load(f)

openai.api_key = data["API_KEY"]

gpt = GPT(engine="davinci",

temperature=0.5,

max_tokens=100)

use as:

## Example

prompt = "xxxxx"

output = gpt.submit_request(prompt)

print(output.choices[0].text)

## or

prompt = "xxxxx"

print(gpt.get_top_reply(prompt))
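The `Example` import above is never used in the snippet. A common use for such a class is few-shot priming: a handful of input/output pairs are prepended to the prompt so the model continues the pattern. The sketch below shows that idea with plain strings; `build_few_shot_prompt` and the `input:`/`output:` labels are hypothetical and are not the `gpt` module's actual API.

```python
def build_few_shot_prompt(examples, new_input):
    """Prepend input/output pairs so the model can continue the pattern."""
    lines = []
    for example_input, example_output in examples:
        lines.append(f"input: {example_input}")
        lines.append(f"output: {example_output}")
        lines.append("")  # blank line between examples
    lines.append(f"input: {new_input}")
    lines.append("output:")  # the model is expected to complete this line
    return "\n".join(lines)


# Made-up translation pairs, purely for illustration.
examples = [("Hello", "Bonjour"), ("Thank you", "Merci")]
prompt = build_few_shot_prompt(examples, "Good night")
print(prompt)
```

The resulting string could then be passed as `prompt` to `gpt.submit_request(prompt)`.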

GPT Index: connect GPT with my own data

2 Feb 2023

"GPT Index" is a toolkit of data structures designed to help you connect LLMs with your external data.

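The underlying idea can be sketched without the library: pick the pieces of your own data most relevant to a question and put them in the prompt. The code below is a toy illustration using word overlap; the function names and documents are made up, and it is not how GPT Index itself is implemented.

```python
def tokenize(text):
    """Lowercase and split on whitespace, stripping basic punctuation."""
    return {word.strip(".,?!:;").lower() for word in text.split()}


def retrieve(question, documents, top_k=1):
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, documents):
    """Place the retrieved context ahead of the question."""
    context = "\n".join(retrieve(question, documents))
    return f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"


# Made-up private notes standing in for "your external data".
documents = [
    "The quarterly sales review is scheduled for Friday.",
    "The office wifi password is rotated every month.",
    "Invoices are sent to customers on the first of the month.",
]
prompt = build_prompt("When is the sales review?", documents)
print(prompt)
```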
Resources

Tokens

GPT-4

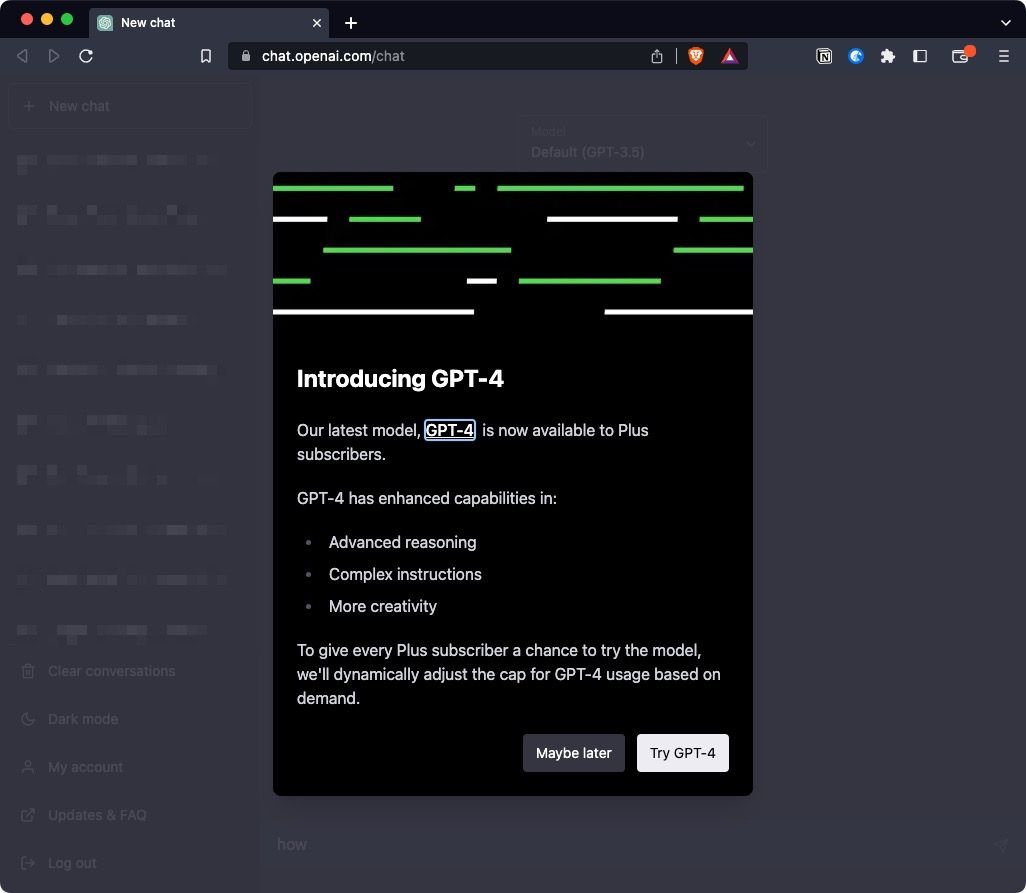

11 Mar 2023

First confirmation of upcoming GPT-4 model:

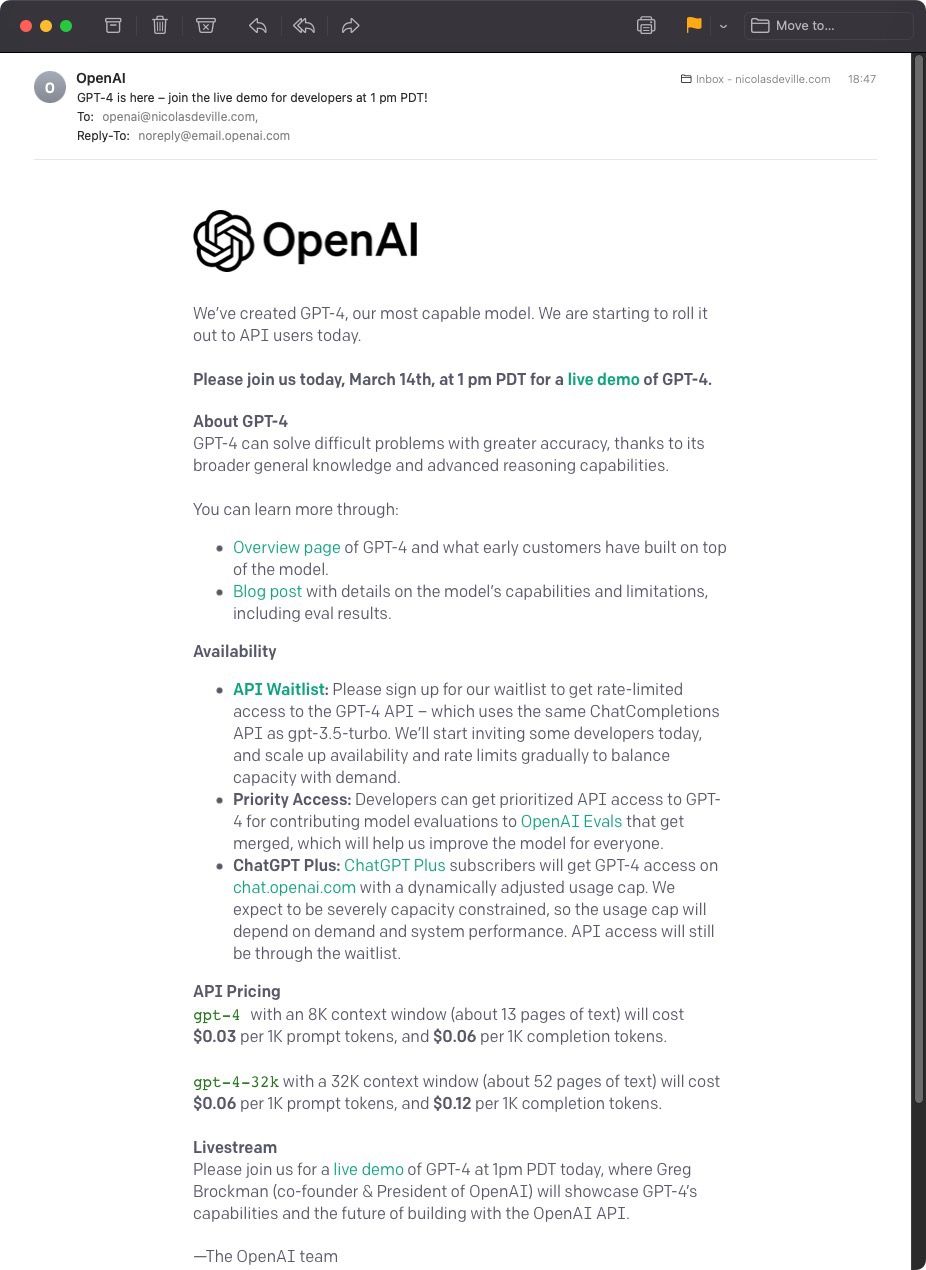

14 Mar 2023

Received launch email & invitation to join live launch stream:

Live Launch:

I like that it's the somewhat nervous CTO who presents - not a marketing presentation, yet still very polished.

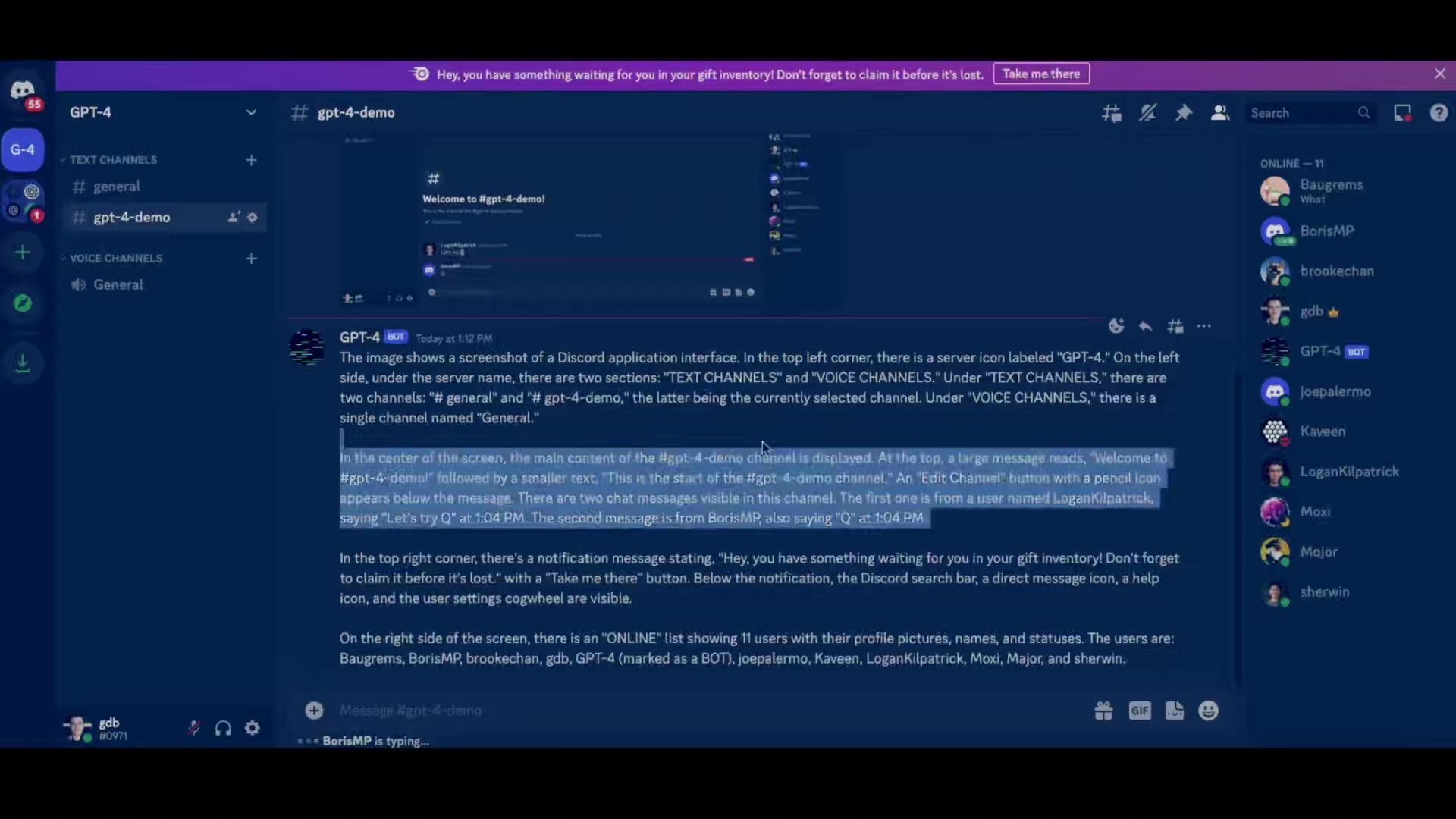

Live interaction with the audience is via Discord.

Despite its status as a potential future $1 trillion company, OpenAI still comes across as a research-focused, developer-oriented company.

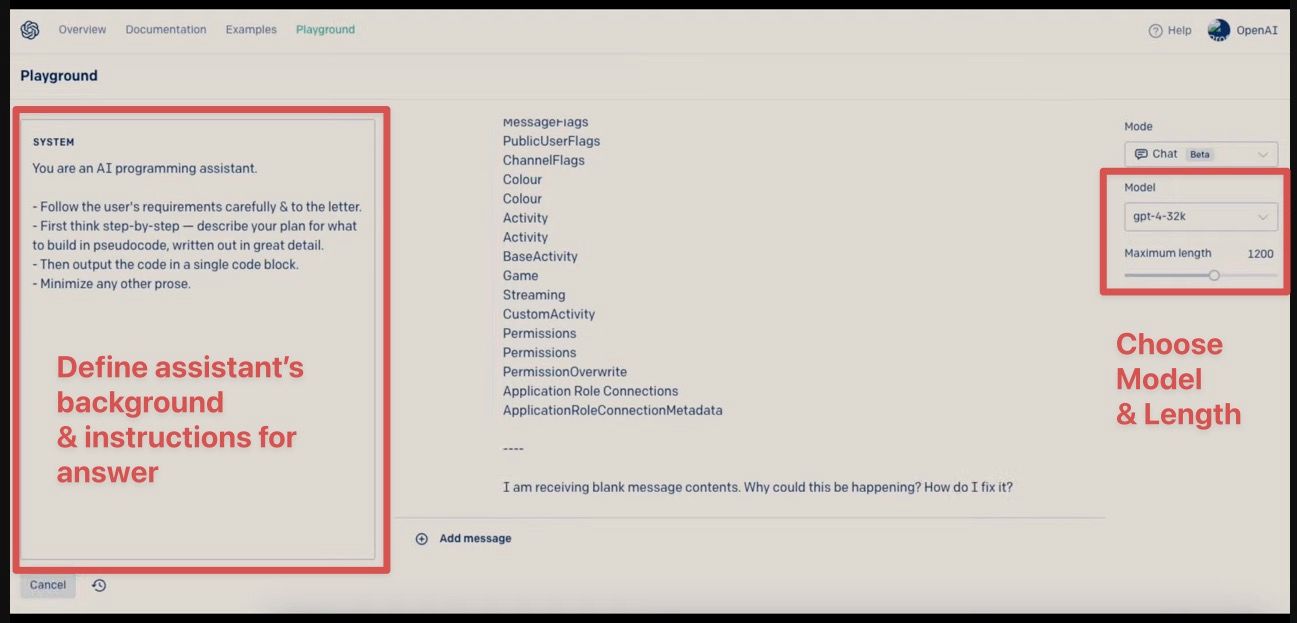

GPT-4's Playground (mimicked in ChatGPT Plus soon?)

1st demo, possible with 4, impossible with 3.5: "summarise a 1-page text in one line, only with words starting with 'q'".

separate inputs

Separate input for pre-prompt instructions ("You are X with Y attributes").

Main input allows for multi-step instructions, including long-form text (e.g. code or an article).
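These two inputs map naturally onto the `system` and `user` roles of OpenAI's chat message format. A minimal sketch of how such a payload could be assembled; the helper name and sample texts are made up, and no API call is made here.

```python
import json


def build_messages(system_instructions, user_input):
    """Assemble a chat-style message list with separate system and user parts."""
    return [
        {"role": "system", "content": system_instructions},
        {"role": "user", "content": user_input},
    ]


messages = build_messages(
    "You are a code reviewer who answers concisely.",
    "Step 1: read the function below.\n"
    "Step 2: list any bugs.\n\n"
    "def add(a, b):\n    return a - b",
)
print(json.dumps(messages, indent=2))
```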

better results

Code generation becomes much better, given more context.

Understands context (e.g. being run in Jupyter) and dependencies (e.g. missing libraries and how to install them).

Dirty trick: work around the 2021 data cutoff by supplying the missing data to the model yourself.

eg. add API documentation updates to the prompt.

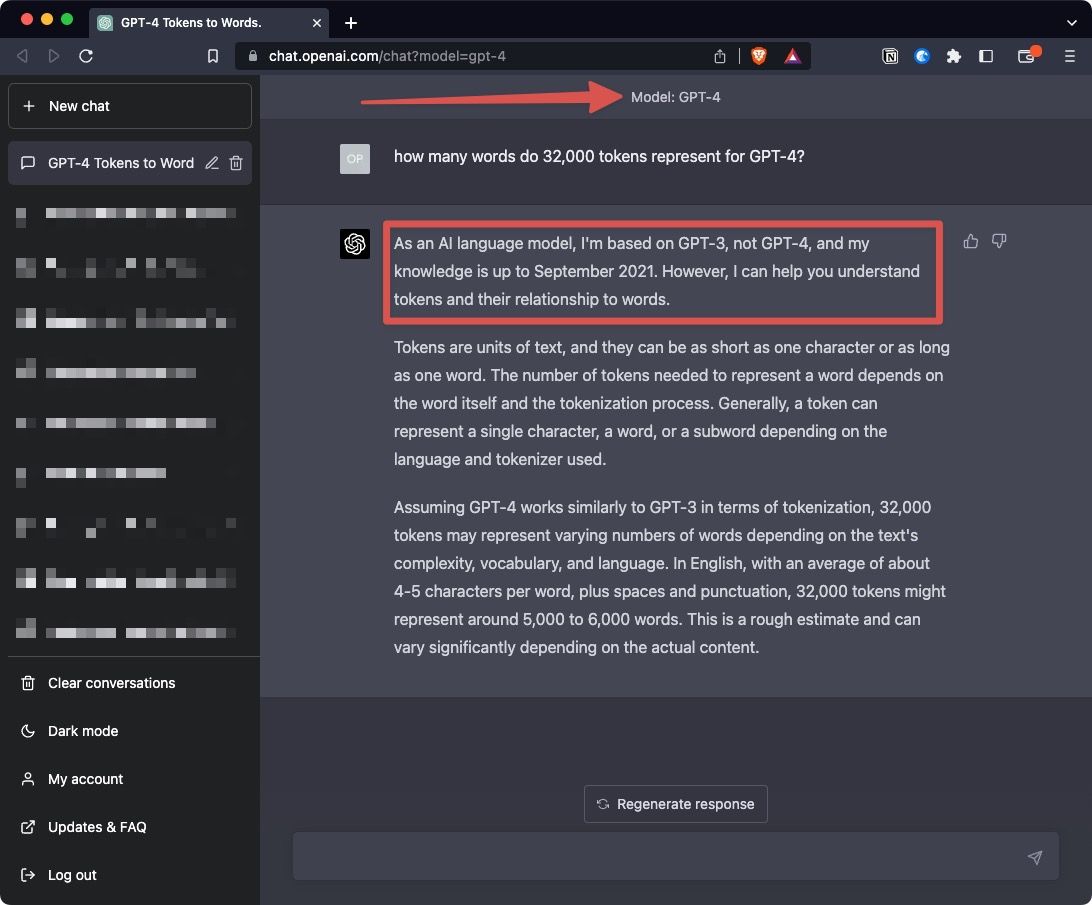

32,000 tokens accepted.

NB: ChatGPT says that's 5-6k words, but it should be ~25k words.
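The ~25k figure follows from the common rule of thumb that one token is roughly three quarters of an English word. A quick check, treating that ratio as an approximation (it varies with language and content):

```python
WORDS_PER_TOKEN = 0.75  # rule-of-thumb average for English text, not an exact value


def estimate_words(token_count, words_per_token=WORDS_PER_TOKEN):
    """Estimate how many English words fit in a given number of tokens."""
    return int(token_count * words_per_token)


print(estimate_words(32_000))  # 24000
```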

A good showcase of GPT-4's capabilities, but I hope future models will either be connected live to the internet (i.e. a daily run?) or at least have a shorter gap between the data-ingestion cutoff and production use.

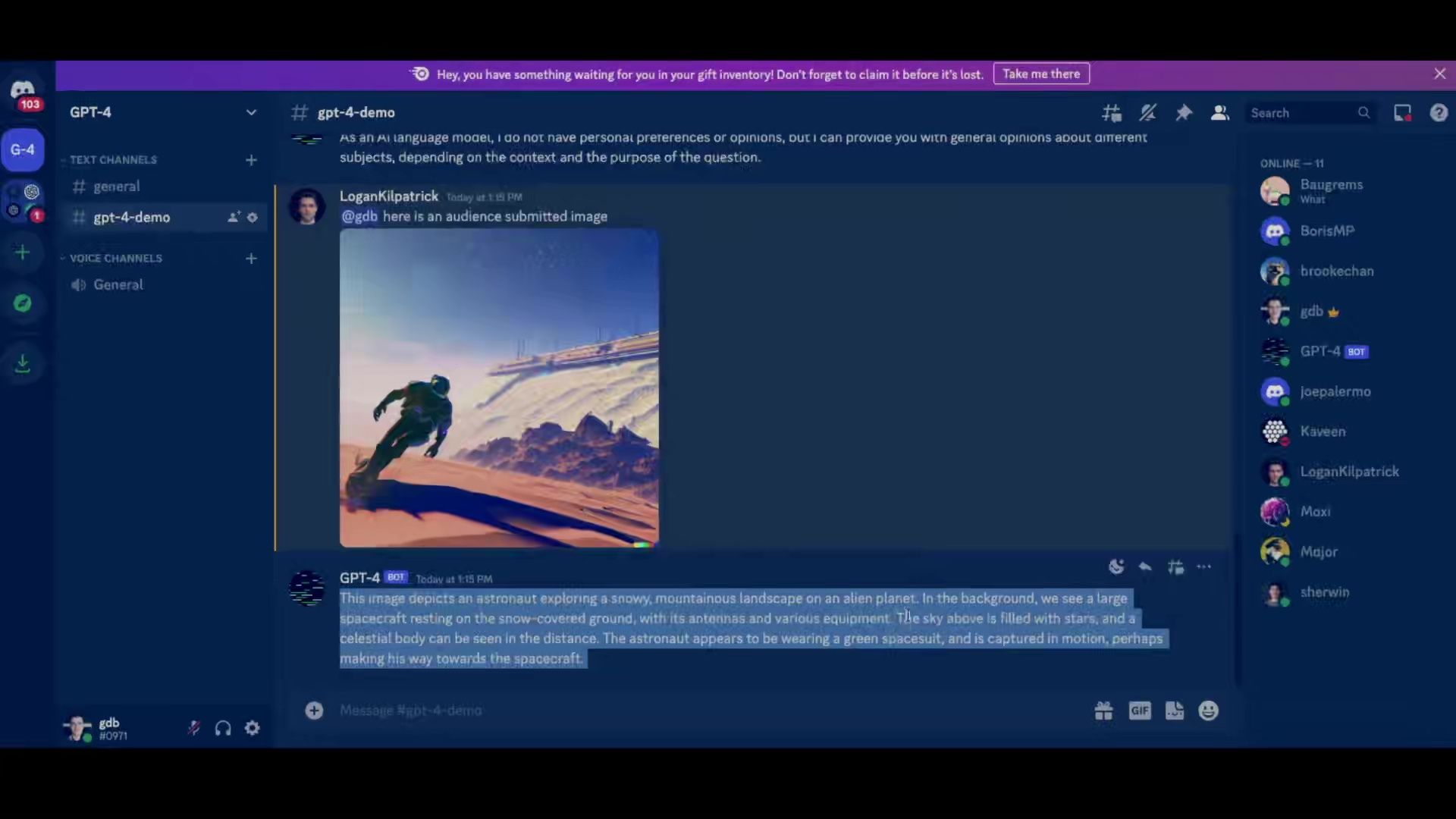

multi-modal input - text & images

"Vision Model", not just "Language Model" - still in Beta with one partner.

Demo of upcoming new feature generating a response with multi-modal input: image + text.

Not available yet.

description of image

Here: submits a screenshot of the live Discord channel and asks GPT-4 to describe in detail what the screenshot contains. Good results.

Live demo in Discord: users submit images with questions in a dedicated channel, with live answers from GPT-4.

That's a cool demo format! (probably nerve-wracking for the organisers). Feels "real".

picture to website

Demo: from taking a picture to a website with a prompt.

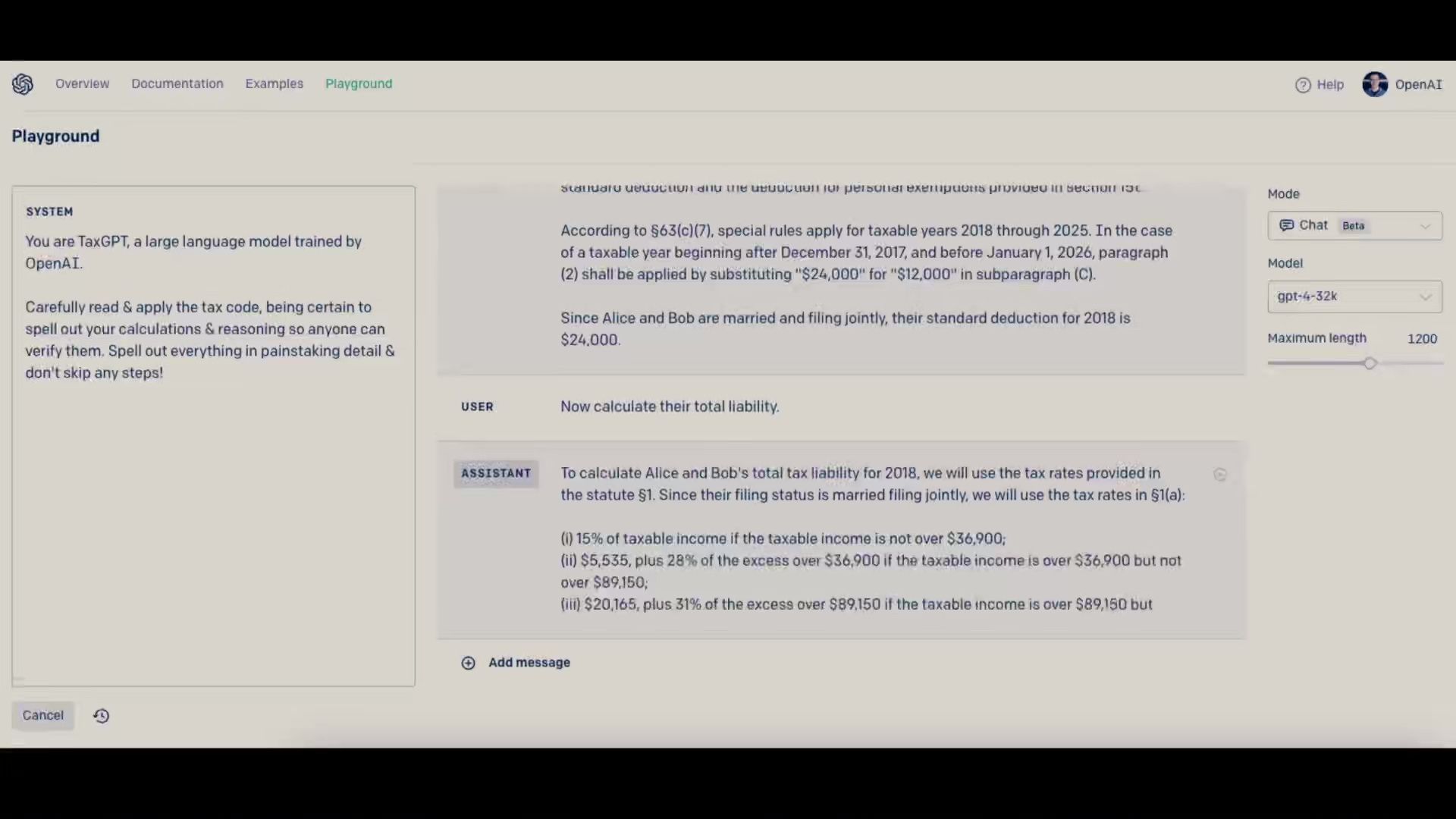

tax code demo

Demo: provide tax code & question - answers with result + step-by-step logic.

Impressive calculations (eg taxes) considering it's "mental calculation" (prediction) - not connected to a calculator (potential future improvement).

Stream ends after 25 minutes.

thoughts

No slides, no overall presentation, etc.

Just a simple & effective developer-focused livestream.

I hope "Open"AI will communicate more/better about the bigger picture - for the company, its technology, and its impact on the world.

(beyond what Sam Altman tweets @sama)

Available in ChatGPT Plus immediately

Immediately available in ChatGPT Plus 😁

"GPT-4 currently has a cap of 100 messages every 4 hours"

I'm not sure what to test right now 😂

Might come back to it tomorrow...

Well, perhaps not..

First idea ended up very "meta" - it doesn't know about itself, because GPT-4 came out after 2021... but it also says it's based on GPT-3.. perhaps just a bug and the model is not properly activated yet? 🤔

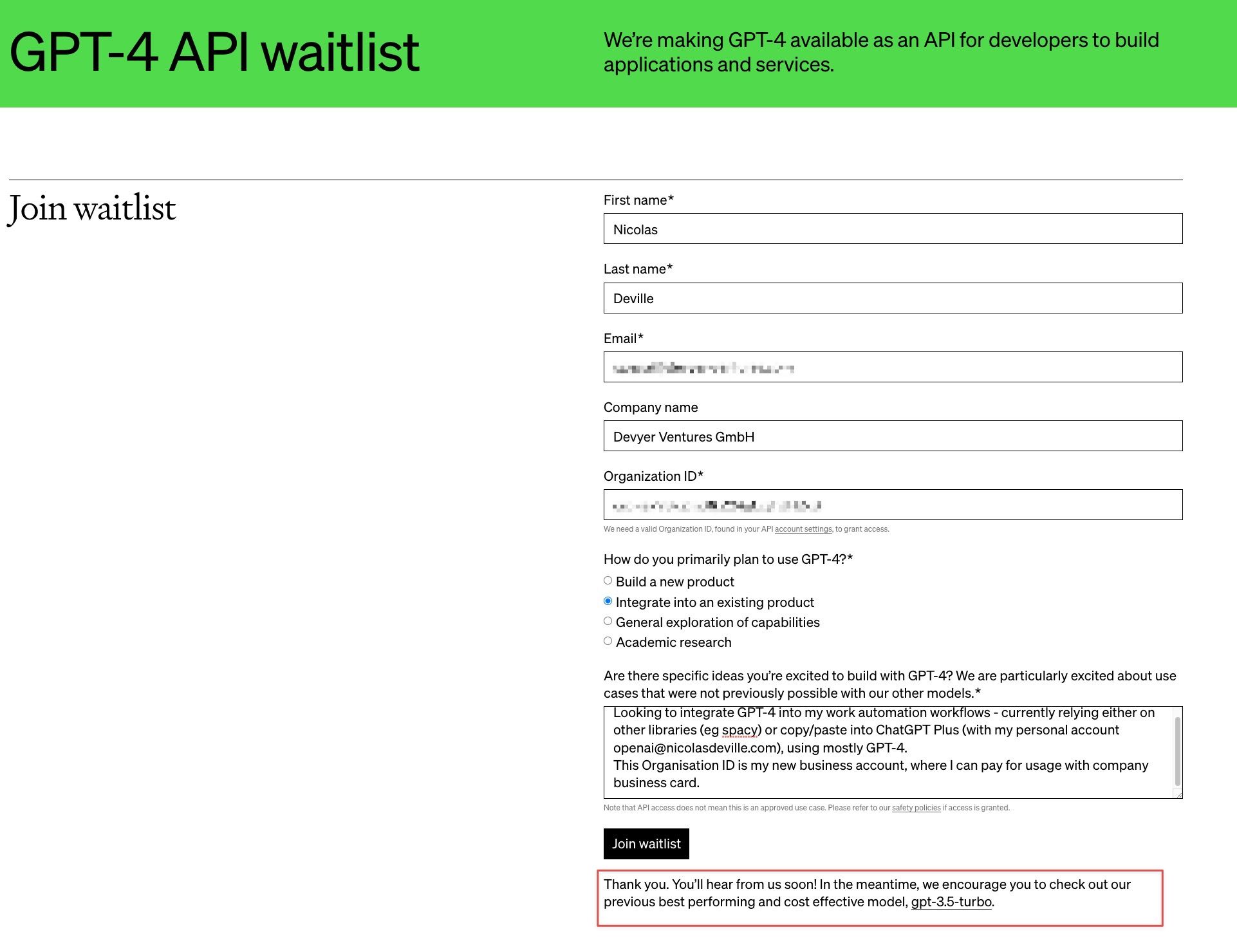

GPT-4 API

15 Apr 2023

Created a new business account and applied for GPT-4 API access.

08 May 2023

Reapplied with another account using BtoBSales.EU, explaining as:

Exploring use cases for Sales purposes, both in-house and as a potential product.

Paying user of both GitHub Copilot & ChatGPT Plus, but looking forward to the 32k-token capacity to help rework many long Python scripts (1,500+ lines of code).